Routing model

Each agent uses its own model configuration to decide where its LLM requests go.

| Agent model configuration | Request route |
|---|---|
| passthrough: true | Direct to that agent’s configured base_url |
| passthrough: false or unset | Tracecat’s managed LiteLLM gateway |
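The routing rule in the table above can be sketched as a small function. This is only an illustration: the `ModelConfig` class, the `resolve_route` function, and the `MANAGED_GATEWAY_URL` placeholder are hypothetical names, not part of Tracecat's API.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical placeholder for the managed LiteLLM gateway address.
MANAGED_GATEWAY_URL = "https://managed-litellm.invalid"


@dataclass
class ModelConfig:
    """Illustrative stand-in for an agent's model configuration."""
    base_url: Optional[str] = None
    passthrough: Optional[bool] = None  # None models "unset"


def resolve_route(config: ModelConfig) -> str:
    """Return the URL that this agent's LLM requests would be sent to."""
    if config.passthrough is True:
        if not config.base_url:
            # Assumption: passthrough is only meaningful with a base_url.
            raise ValueError("passthrough requires a base_url")
        return config.base_url
    # passthrough is False or unset: use the managed gateway.
    return MANAGED_GATEWAY_URL
```

Because the decision depends only on one agent's configuration, two agents in the same run can resolve to different routes.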

/v1/messages. This prevents doubled paths such as /v1/v1/messages.
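One way to avoid a doubled path is to drop the version prefix from the endpoint when the base URL already ends with it. The sketch below is an assumption about how such normalization could work, not Tracecat's actual implementation; `join_endpoint` is a hypothetical helper.

```python
def join_endpoint(base_url: str, endpoint: str = "/v1/messages") -> str:
    """Join a base URL and an endpoint path without repeating the /v1 prefix."""
    prefix = "/v1"
    base = base_url.rstrip("/")
    # If the base already ends in /v1, strip that prefix from the endpoint
    # so the result is .../v1/messages rather than .../v1/v1/messages.
    if base.endswith(prefix) and endpoint.startswith(prefix + "/"):
        endpoint = endpoint[len(prefix):]
    return base + endpoint
```

With this helper, a base URL of https://gateway.example and one of https://gateway.example/v1 both resolve to the same messages URL.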

Root agents

For a root agent, Tracecat keys direct passthrough routing by the model string the root agent sends. For example, requests with model: customer-alias go directly to https://customer-litellm.example.

Subagents

For a subagent, Tracecat keys direct passthrough routing by the subagent’s scoped model route. This lets Tracecat route each preset agent independently, even when several agents share the same sandbox process. For example, a subagent with passthrough enabled can send its requests directly to https://child-litellm.example. Requests for other subagents fall back to managed LiteLLM unless those subagents also enable passthrough.
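The keyed lookup with a managed fallback can be pictured as a small route table. This is a minimal sketch under stated assumptions: the `PassthroughRoutes` class, the `MANAGED` placeholder, and the `subagent:child` key format are all hypothetical, since the actual format of a scoped model route is not described here.

```python
from typing import Dict

# Hypothetical marker for "send this request to managed LiteLLM".
MANAGED = "managed-litellm"


class PassthroughRoutes:
    """Illustrative table of direct routes, keyed by a string."""

    def __init__(self) -> None:
        self._routes: Dict[str, str] = {}

    def register(self, key: str, base_url: str) -> None:
        """Record a direct route for an agent that enabled passthrough."""
        self._routes[key] = base_url

    def resolve(self, key: str) -> str:
        """Return the direct route for a key, or fall back to managed."""
        return self._routes.get(key, MANAGED)


routes = PassthroughRoutes()
# Root agent: keyed by the model string it sends.
routes.register("customer-alias", "https://customer-litellm.example")
# Subagent: keyed by a scoped route (this key format is an assumption).
routes.register("subagent:child", "https://child-litellm.example")
```

A subagent that never registered a route, such as a hypothetical `subagent:other`, resolves to the managed fallback.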

Credentials

Tracecat resolves passthrough credentials from the custom provider selected by the agent’s model configuration. If a root agent and subagent use different passthrough providers, each route uses its own provider credentials. Managed LiteLLM requests keep the sandbox’s managed gateway token.

Add a custom source

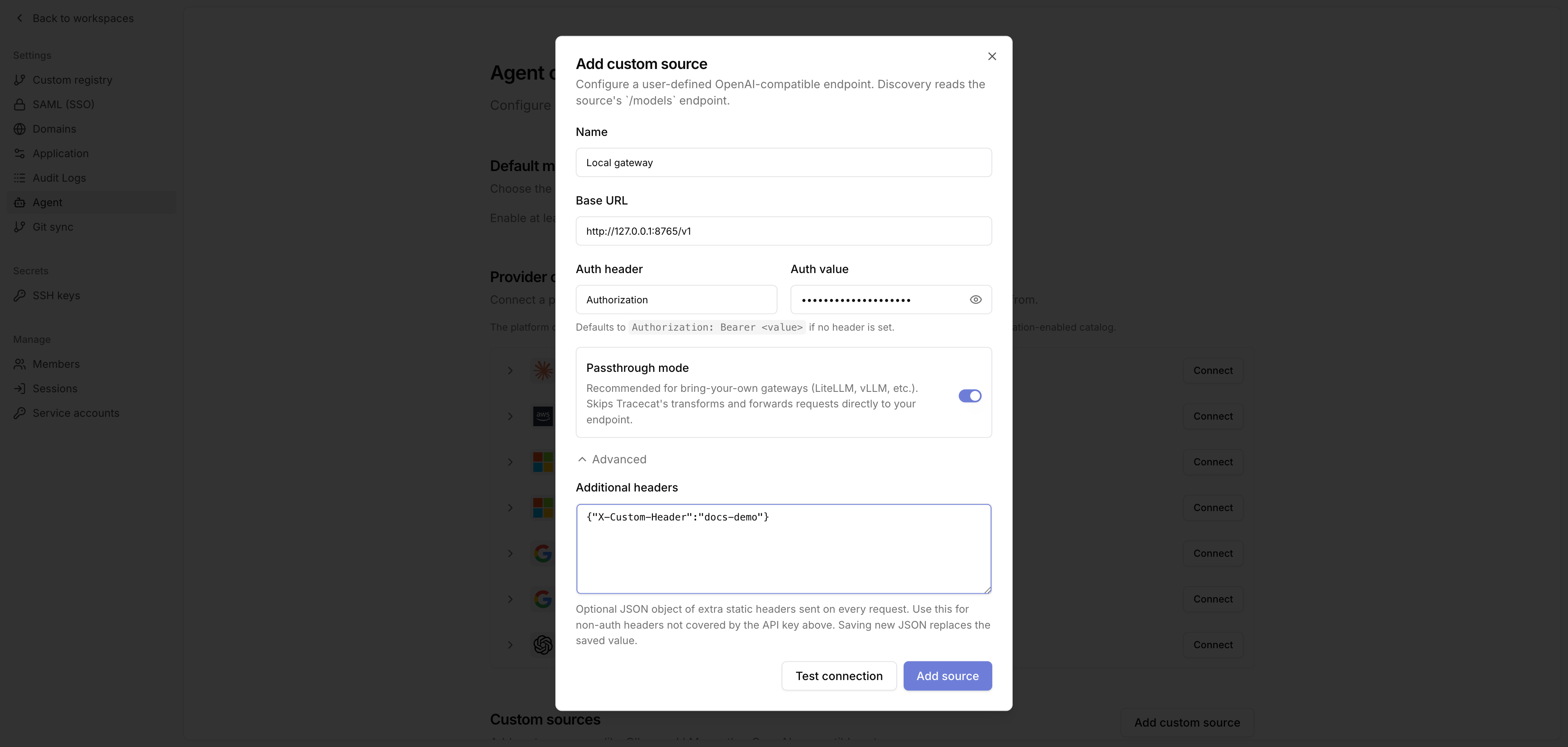

Go to Organization settings, open Agent, then go to Custom sources and click Add custom source.

- Name: A readable label, such as Local gateway.

- Base URL: The OpenAI-compatible API base URL, e.g. https://gateway.example/v1.

- Auth header: Leave blank to use Authorization.

- Auth value: The provider credential. If Auth header is blank, Tracecat sends Authorization: Bearer <value>.

- Passthrough mode: Enable this to route agent requests directly to this gateway instead of Tracecat’s managed LiteLLM. Required for bring-your-own gateways such as LiteLLM, vLLM, and Ollama-compatible endpoints.
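The Auth header and Auth value fields combine into a request header roughly as follows. The blank-header case matches the behavior described above; how the value is sent under a custom header name is not stated, so sending it unchanged is an assumption here. `build_auth_headers` is a hypothetical helper.

```python
from typing import Dict, Optional


def build_auth_headers(auth_value: str, auth_header: Optional[str] = None) -> Dict[str, str]:
    """Build the credential header for a custom source."""
    if not auth_header:
        # Blank Auth header: default to a Bearer token in Authorization.
        return {"Authorization": f"Bearer {auth_value}"}
    # Assumption: a custom header carries the value as-is, with no prefix.
    return {auth_header: auth_value}
```

For example, a gateway that expects a key in a header such as X-Api-Key would set that name as the Auth header and the key as the Auth value.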

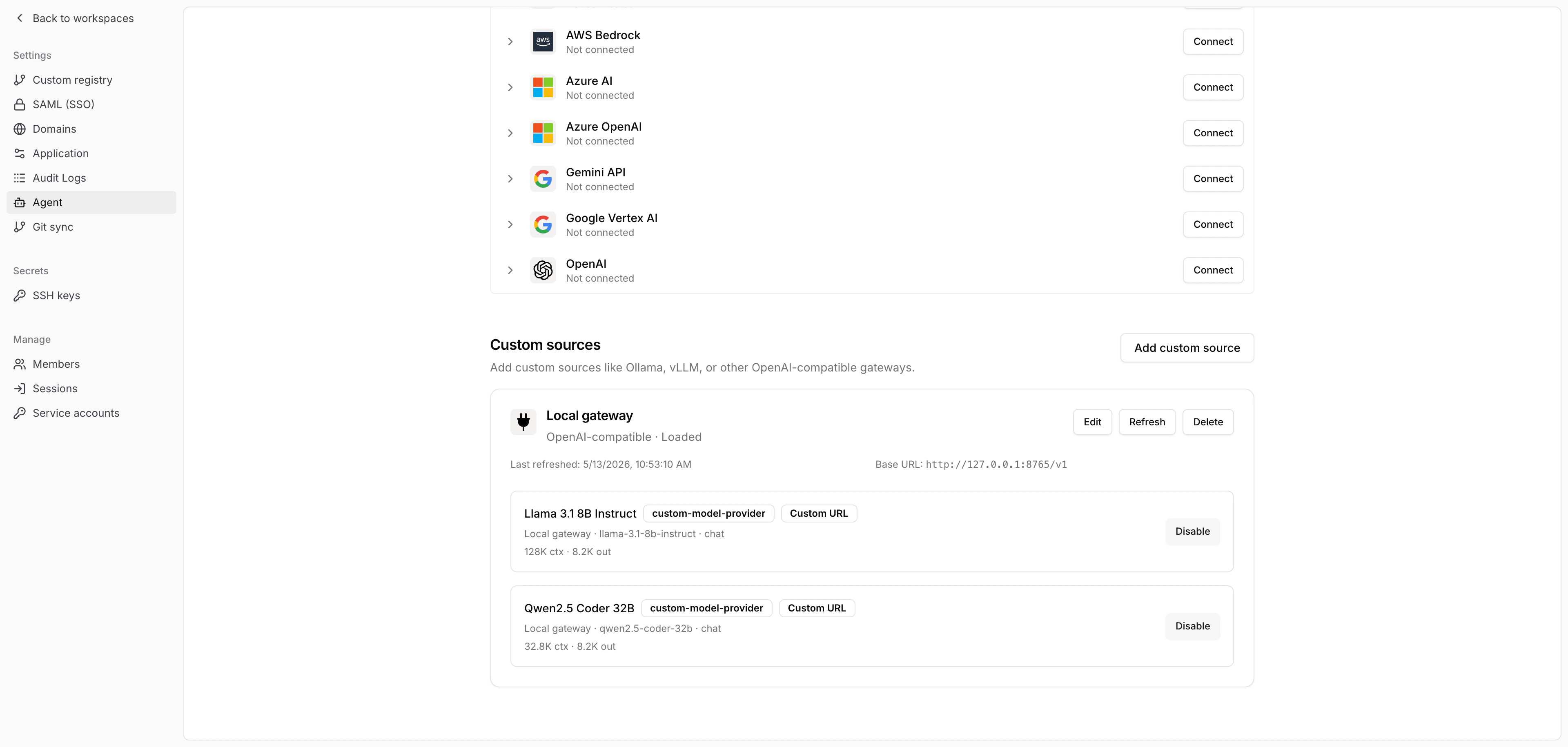

Discover and enable models

After you save the source, click Refresh. Tracecat calls the source’s /models endpoint and adds the returned models to the organization catalog.
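To see what a refresh would discover, it can help to know the shape of an OpenAI-compatible model listing. The sketch below assumes the source returns the OpenAI-style `{"object": "list", "data": [{"id": ...}]}` payload; `models_url` and `extract_model_ids` are hypothetical helpers, the model names are made up, and how Tracecat parses the response internally is not shown here.

```python
from typing import Any, Dict, List


def models_url(base_url: str) -> str:
    """Build the model listing URL from a source's base URL."""
    return base_url.rstrip("/") + "/models"


def extract_model_ids(payload: Dict[str, Any]) -> List[str]:
    """Collect model ids from an OpenAI-style model listing."""
    return [
        item["id"]
        for item in payload.get("data", [])
        if isinstance(item, dict) and "id" in item
    ]


# Sample payload with made-up model names, for illustration only.
sample = {
    "object": "list",
    "data": [
        {"id": "example-model-a", "object": "model"},
        {"id": "example-model-b", "object": "model"},
    ],
}
model_ids = extract_model_ids(sample)
```

If the gateway returns an empty or differently shaped listing, no ids are extracted, which is a useful first check when a refresh adds nothing to the catalog.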

Use the provider

Choose the enabled custom source model anywhere Tracecat lets you select an agent model, such as the default model selector or a preset agent configuration. Refresh the source again after you add or remove models from the upstream gateway. Edit the source when you rotate credentials, change the base URL, or update static headers.

Related pages

- See AI agent for the ai.agent and ai.preset_agent action reference.

- See AI action for single-call LLM workflow actions.

- See Secrets and variables for agent secret handling.

- See MCP servers for connecting MCP tools to agents.